Neither approach captures all redundancies, however. Common backup systems try to exploit this by omitting (or hard linking) files that haven't changed or storing differences between files. In the case of data backups, which routinely are performed to protect against data loss, most data in a given backup remain unchanged from the previous backup. It is most effective in applications where many copies of very similar or even identical data are stored on a single disk. Storage-based data deduplication reduces the amount of storage needed for a given set of files. Examples are CSS classes and named references in MediaWiki. In computer code, deduplication is done by, for example, storing information in variables so that they don't have to be written out individually but can be changed all at once at a central referenced location. Deduplication is often paired with data compression for additional storage saving: Deduplication is first used to eliminate large chunks of repetitive data, and compression is then used to efficiently encode each of the stored chunks. With data deduplication, only one instance of the attachment is actually stored the subsequent instances are referenced back to the saved copy for deduplication ratio of roughly 100 to 1. Each time the email platform is backed up, all 100 instances of the attachment are saved, requiring 100 MB storage space. Whereas compression algorithms identify redundant data inside individual files and encodes this redundant data more efficiently, the intent of deduplication is to inspect large volumes of data and identify large sections – such as entire files or large sections of files – that are identical, and replace them with a shared copy.įor example, a typical email system might contain 100 instances of the same 1 MB ( megabyte) file attachment. 
While possible to combine this with other forms of data compression and deduplication, it is distinct from newer approaches to data deduplication (which can operate at the segment or sub-block level).ĭeduplication is different from data compression algorithms, such as LZ77 and LZ78. Ī related technique is single-instance (data) storage, which replaces multiple copies of content at the whole-file level with a single shared copy. Given that the same byte pattern may occur dozens, hundreds, or even thousands of times (the match frequency is dependent on the chunk size), the amount of data that must be stored or transferred can be greatly reduced. Whenever a match occurs, the redundant chunk is replaced with a small reference that points to the stored chunk. These chunks are identified and stored during a process of analysis, and compared to other chunks within existing data.

The deduplication process requires comparison of data 'chunks' (also known as 'byte patterns') which are unique, contiguous blocks of data.

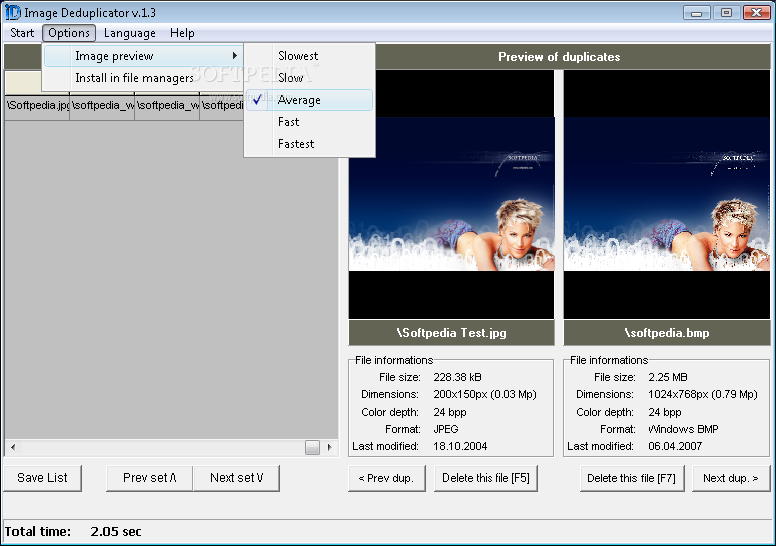

It can also be applied to network data transfers to reduce the number of bytes that must be sent. Successful implementation of the technique can improve storage utilization, which may in turn lower capital expenditure by reducing the overall amount of storage media required to meet storage capacity needs. In computing, data deduplication is a technique for eliminating duplicate copies of repeating data. Data processing technique to eliminate duplicate copies of repeating data
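
As a rough illustration of the chunk matching and the email attachment example described in this article, here is a minimal Python sketch. It is not how any particular product works: the fixed 4096-byte chunk size, the `DedupStore` class, and the `email_example` helper are all hypothetical names and choices made for illustration. It assumes chunks can be compared by their SHA-256 digest (ignoring the negligible chance of a collision), and it uses `zlib` as a stand-in for the compression step applied to each stored chunk.

```python
import hashlib
import random
import zlib

CHUNK_SIZE = 4096  # assumed fixed chunk size in bytes (hypothetical choice)


class DedupStore:
    """Toy chunk store: each unique chunk is kept once, keyed by its hash."""

    def __init__(self, chunk_size=CHUNK_SIZE):
        self.chunk_size = chunk_size
        self.chunks = {}        # SHA-256 digest -> zlib-compressed chunk
        self.unique_bytes = 0   # uncompressed size of all unique chunks
        self.logical_bytes = 0  # total size of everything passed to put()

    def put(self, data):
        """Store data and return the list of references (digests) to its chunks."""
        refs = []
        for start in range(0, len(data), self.chunk_size):
            chunk = data[start:start + self.chunk_size]
            digest = hashlib.sha256(chunk).digest()
            if digest not in self.chunks:
                # First time this byte pattern is seen: store it, compressed.
                self.chunks[digest] = zlib.compress(chunk)
                self.unique_bytes += len(chunk)
            # A repeated chunk costs only a small reference to the stored copy.
            refs.append(digest)
        self.logical_bytes += len(data)
        return refs

    def get(self, refs):
        """Rebuild the original data from its chunk references."""
        return b"".join(zlib.decompress(self.chunks[d]) for d in refs)

    def ratio(self):
        """Deduplication ratio: logical bytes divided by unique bytes stored."""
        return self.logical_bytes / self.unique_bytes


def email_example(copies=100, size=1024 * 1024):
    """Back up `copies` identical attachments of `size` bytes each."""
    # Seeded pseudo-random bytes stand in for a real 1 MB attachment.
    attachment = random.Random(0).getrandbits(8 * size).to_bytes(size, "big")
    store = DedupStore()
    mailbox = [store.put(attachment) for _ in range(copies)]
    # Any copy can be rebuilt from its references alone.
    assert store.get(mailbox[0]) == attachment
    return store


if __name__ == "__main__":
    store = email_example()
    print(f"logical: {store.logical_bytes} bytes, unique: {store.unique_bytes} bytes")
    print(f"deduplication ratio: {store.ratio():.0f} to 1")
```

With 100 identical copies of a 1 MB attachment, the sketch keeps one copy's worth of chunks and reports a ratio of about 100 to 1, matching the email example. The ratio here counts only chunk data and ignores the space taken by the references themselves. Fixed-size chunking is the simplest choice; it is used here only to keep the example short.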
